Database

1993

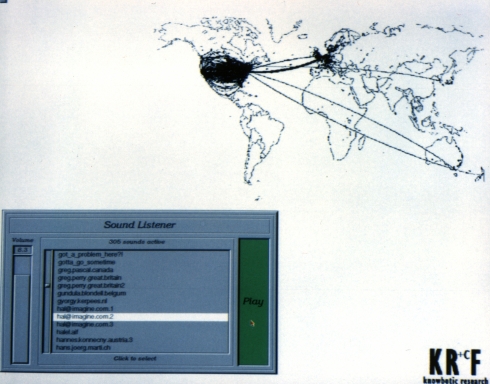

For this project, Knowbotic Research sent out an international call for participation. From all over the world, digitized sound samples in the form of data files were sent in across international computer networks. The audio information in these files was then assigned spatial and geometric characteristics, so that the sounds could be given visual shapes and represented in virtual reality as objects floating in air. The active participant is equipped with a sensor band and a miniature eye monitor. The sensor band allows the control system to ascertain the user's exact location. This information, combined with the momentary positions of the floating sounds, allows the user's virtual point of view to be rendered and transmitted to the eyepiece monitor. In the dark, the user sees himself surrounded by groups of sounds which he can reach out and 'touch' and hear.

Personal statements, attitudes towards the world summed up in six-second soundbites, form the raw material for this experience (the human voice, noises, music, and more). These contributions were requested in announcements on computer networks (Internet, CompuServe, FidoNet), through targeted, worldwide flyers, and through personal contacts. 'smdk' is an interdisciplinary project drawing on the exchange of working methods among media artists, computer musicians, computer scientists, and natural scientists. This interactive data space is connected to various public domain networks. The computer-generated model allows the user a physical dialog with a self-organizing data bank whose information can be accessed on various levels.

All statements are analyzed and allocated their characteristics on the basis of their discernible sound properties. They are given a corresponding shape and transformed into independently operating units, or agents. This analysis also brings about localized rules for their behavior in virtual space. They organize themselves into self-similar sound groups, forming an organism that continuously restructures itself.
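
The self-organization described above can be illustrated with a minimal sketch. It is not the project's actual implementation, which the text does not document. The two-value feature vector, the similarity threshold, the movement rate, and every name in the code (`SoundAgent`, `step`, `make_agents`, `group_spread`) are assumptions made for illustration. Each agent follows one local rule: drift toward the agents whose sound features resemble its own.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class SoundAgent:
    features: tuple   # hypothetical sound descriptors, e.g. (pitch, brightness)
    position: list    # x, y, z coordinates in the virtual space


def feature_distance(a, b):
    """Euclidean distance between two agents' sound features."""
    return math.dist(a.features, b.features)


def step(agents, similarity_threshold=0.3, rate=0.1):
    """Apply one local rule: move each agent a fraction of the way toward
    the centroid of the other agents whose features are similar to its own."""
    new_positions = []
    for agent in agents:
        similar = [
            other for other in agents
            if other is not agent
            and feature_distance(agent, other) < similarity_threshold
        ]
        if not similar:
            new_positions.append(list(agent.position))
            continue
        centroid = [
            sum(other.position[i] for other in similar) / len(similar)
            for i in range(3)
        ]
        new_positions.append(
            [p + rate * (c - p) for p, c in zip(agent.position, centroid)]
        )
    # Update all agents together so every move uses the same snapshot.
    for agent, position in zip(agents, new_positions):
        agent.position = position


def make_agents(seed=0):
    """Create two families of five agents each (assumed toy data):
    similar features inside a family, random starting positions."""
    rng = random.Random(seed)
    agents = []
    for base in ((0.1, 0.1), (0.9, 0.9)):
        for _ in range(5):
            features = tuple(b + rng.uniform(-0.05, 0.05) for b in base)
            position = [rng.uniform(0, 10) for _ in range(3)]
            agents.append(SoundAgent(features, position))
    return agents


def group_spread(agents):
    """Mean pairwise spatial distance within a set of agents."""
    pairs = [(a, b) for i, a in enumerate(agents) for b in agents[i + 1:]]
    return sum(math.dist(a.position, b.position) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    agents = make_agents()
    print("spread of first family before:", group_spread(agents[:5]))
    for _ in range(50):
        step(agents)
    print("spread of first family after: ", group_spread(agents[:5]))
```

In this toy version, agents with similar features gradually gather into spatial clusters without any central controller assigning them a place. A continuously restructuring system like the one described would presumably also need rules for incoming sounds and for user interaction, which this sketch leaves out.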